Training a smartcab to drive

In this project, you will work towards constructing an optimized Q-Learning driving agent that will navigate a Smartcab through its environment towards a goal. Here is the code for this project which was a part of Udacity’s Machine Learning Engineer Nanodegree.

Since the Smartcab is expected to drive passengers from one location to another, the driving agent will be evaluated on two very important metrics: Safety and Reliability. A driving agent that gets the Smartcab to its destination while running red lights or narrowly avoiding accidents would be considered unsafe. Similarly, a driving agent that frequently fails to reach the destination in time would be considered unreliable. Maximizing the driving agent’s safety and reliability would ensure that Smartcabs have a permanent place in the transportation industry.

Safety and Reliability are measured using a letter-grade system as follows:

| Grade | Safety | Reliability |

|---|---|---|

| A+ | Agent commits no traffic violations, and always chooses the correct action. | Agent reaches the destination in time for 100% of trips. |

| A | Agent commits few minor traffic violations, such as failing to move on a green light. | Agent reaches the destination on time for at least 90% of trips. |

| B | Agent commits frequent minor traffic violations, such as failing to move on a green light. | Agent reaches the destination on time for at least 80% of trips. |

| C | Agent commits at least one major traffic violation, such as driving through a red light. | Agent reaches the destination on time for at least 70% of trips. |

| D | Agent causes at least one minor accident, such as turning left on green with oncoming traffic. | Agent reaches the destination on time for at least 60% of trips. |

| F | Agent causes at least one major accident, such as driving through a red light with cross-traffic. | Agent fails to reach the destination on time for at least 60% of trips. |

To assist evaluating these important metrics, you will need to load visualization code that will be used later on in the project. Run the code cell below to import this code which is required for your analysis.

# Import the visualization codeimport visuals as vs

# Pretty display for notebooks%matplotlib inlineUnderstand the World

Before starting to work on implementing your driving agent, it’s necessary to first understand the world (environment) which the Smartcab and driving agent work in. One of the major components to building a self-learning agent is understanding the characteristics about the agent, which includes how the agent operates. To begin, simply run the agent.py agent code exactly how it is — no need to make any additions whatsoever. Let the resulting simulation run for some time to see the various working components. Note that in the visual simulation (if enabled), the white vehicle is the Smartcab.

Question 1

In a few sentences, describe what you observe during the simulation when running the default agent.py agent code. Some things you could consider:

- Does the Smartcab move at all during the simulation?

- What kind of rewards is the driving agent receiving?

- How does the light changing color affect the rewards?

Hint: From the /smartcab/ top-level directory (where this notebook is located), run the command

'python smartcab/agent.py'Answer:

- The smartcab is stationary at all times.

- The agent receives negative rewards when there is a green light in front with no oncoming traffic.

- The agent receives positive rewards when there is a green light but it shouldn’t have moved because of traffic.

- The agent receives positive rewards when there is a red light in front.

Understand the Code

In addition to understanding the world, it is also necessary to understand the code itself that governs how the world, simulation, and so on operate. Attempting to create a driving agent would be difficult without having at least explored the “hidden” devices that make everything work. In the /smartcab/ top-level directory, there are two folders: /logs/ (which will be used later) and /smartcab/. Open the /smartcab/ folder and explore each Python file included, then answer the following question.

Question 2

- In the

agent.pyPython file, choose three flags that can be set and explain how they change the simulation. - In the

environment.pyPython file, what Environment class function is called when an agent performs an action? - In the

simulator.pyPython file, what is the difference between the'render_text()'function and the'render()'function? - In the

planner.pyPython file, will the'next_waypoint()function consider the North-South or East-West direction first?

Answer:

- Here are three flags that can be set on the learning agent:

- learning: whether the agent is expected to learn

- alpha: rate at which the agent learns

- epsilon: random exploration factor (1- completely random = no learning; 0- no randomness)

- act()

- render_text() is non GUI output while render() is GUI output

- East-West direction.

Implement a Basic Driving Agent

The first step to creating an optimized Q-Learning driving agent is getting the agent to actually take valid actions. In this case, a valid action is one of None, (do nothing) 'Left' (turn left), 'Right' (turn right), or 'Forward' (go forward). For your first implementation, navigate to the 'choose_action()' agent function and make the driving agent randomly choose one of these actions. Note that you have access to several class variables that will help you write this functionality, such as 'self.learning' and 'self.valid_actions'. Once implemented, run the agent file and simulation briefly to confirm that your driving agent is taking a random action each time step.

Basic Agent Simulation Results

To obtain results from the initial simulation, you will need to adjust following flags:

'enforce_deadline'- Set this toTrueto force the driving agent to capture whether it reaches the destination in time.'update_delay'- Set this to a small value (such as0.01) to reduce the time between steps in each trial.'log_metrics'- Set this toTrueto log the simluation results as a.csvfile in/logs/.'n_test'- Set this to'10'to perform 10 testing trials.

Optionally, you may disable to the visual simulation (which can make the trials go faster) by setting the 'display' flag to False. Flags that have been set here should be returned to their default setting when debugging. It is important that you understand what each flag does and how it affects the simulation!

Once you have successfully completed the initial simulation (there should have been 20 training trials and 10 testing trials), run the code cell below to visualize the results. Note that log files are overwritten when identical simulations are run, so be careful with what log file is being loaded! Run the agent.py file after setting the flags from projects/smartcab folder instead of projects/smartcab/smartcab.

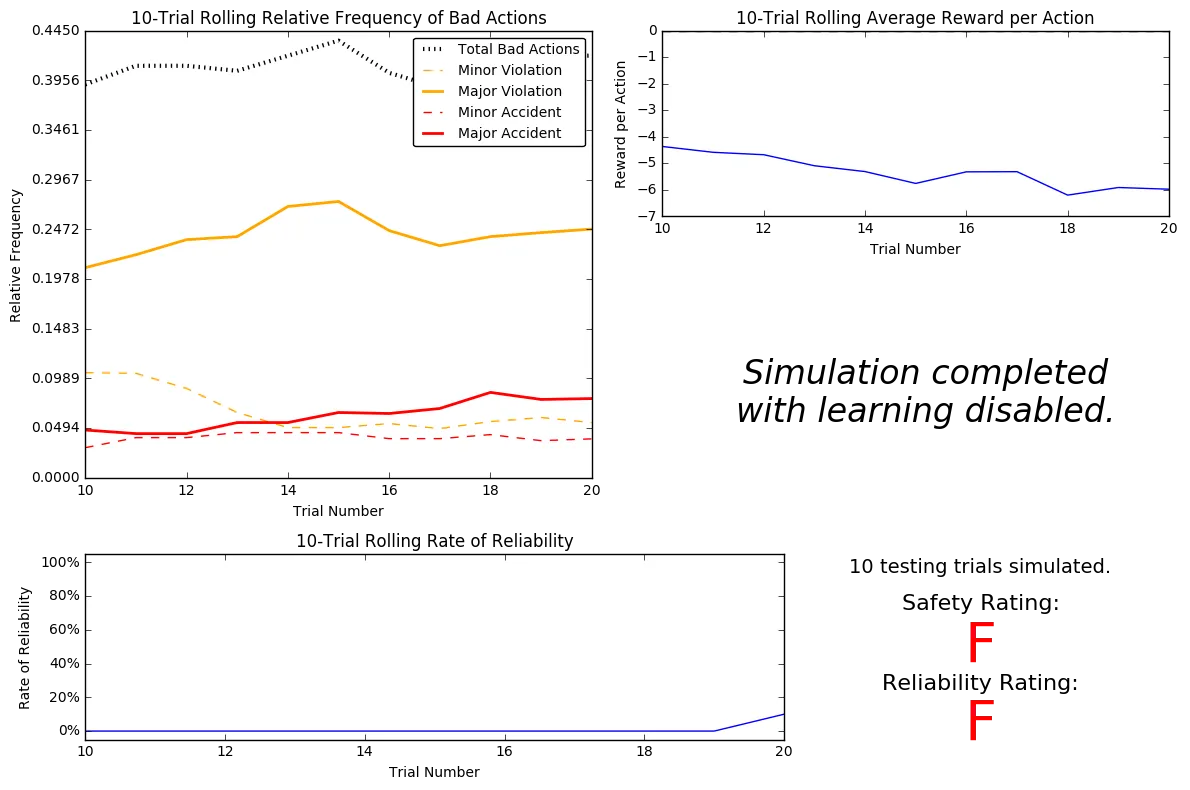

# Load the 'sim_no-learning' log file from the initial simulation resultsvs.plot_trials('sim_no-learning.csv')

Question 3

Using the visualization above that was produced from your initial simulation, provide an analysis and make several observations about the driving agent. Be sure that you are making at least one observation about each panel present in the visualization. Some things you could consider:

- How frequently is the driving agent making bad decisions? How many of those bad decisions cause accidents?

- Given that the agent is driving randomly, does the rate of reliabilty make sense?

- What kind of rewards is the agent receiving for its actions? Do the rewards suggest it has been penalized heavily?

- As the number of trials increases, does the outcome of results change significantly?

- Would this Smartcab be considered safe and/or reliable for its passengers? Why or why not?

Answer:

Relative frequency of bad actions

- Around 40% of the decisions are bad

- More than half of these comprise major violations.

- Between 5%-10% of total actions (or 12%-25% of bad actions) are in each of these categories (minor accidents, major accidents and minor violations)

Average reward per action

This is between -4 and -6. Since the actions are completely random, the agent doesn’t learn and hence penalized heavily.

Rate of reliability

- This is 0% for almost all trials. This makes sense as the agent is driving randomly.

- Rate doesn’t improve over the number trials (the slight peak at the end is a miracle) because agent doesn’t learn anything and keeps taking random actions.

This smartcab is neither safe nor reliable because it is highly unlikely to reach the destination and that is if it manages to avoid accidents.

Inform the Driving Agent

The second step to creating an optimized Q-learning driving agent is defining a set of states that the agent can occupy in the environment. Depending on the input, sensory data, and additional variables available to the driving agent, a set of states can be defined for the agent so that it can eventually learn what action it should take when occupying a state. The condition of 'if state then action' for each state is called a policy, and is ultimately what the driving agent is expected to learn. Without defining states, the driving agent would never understand which action is most optimal — or even what environmental variables and conditions it cares about!

Identify States

Inspecting the 'build_state()' agent function shows that the driving agent is given the following data from the environment:

'waypoint', which is the direction the Smartcab should drive leading to the destination, relative to the Smartcab’s heading.'inputs', which is the sensor data from the Smartcab. It includes'light', the color of the light.'left', the intended direction of travel for a vehicle to the Smartcab’s left. ReturnsNoneif no vehicle is present.'right', the intended direction of travel for a vehicle to the Smartcab’s right. ReturnsNoneif no vehicle is present.'oncoming', the intended direction of travel for a vehicle across the intersection from the Smartcab. ReturnsNoneif no vehicle is present.

'deadline', which is the number of actions remaining for the Smartcab to reach the destination before running out of time.

Question 4

Which features available to the agent are most relevant for learning both safety and efficiency? Why are these features appropriate for modeling the Smartcab in the environment? If you did not choose some features, why are those features not appropriate?

Answer:

Appropriate features

- waypoint: most important feature that affects efficiency

- light: very important for safety; can’t ever run a red light.

- oncoming: can’t make a left turn if the car in front wants to go right or forward.

- left: smartcab can turn right during red light if the car on the left is not going forward.

Features not selected

- right: it doesn’t matter what the vehicle on the right is going to do as long as the smartcab follows the light.

- deadline: no matter what the deadline is, safety comes first. This could have been useful if speed was also part of the actions.

Define a State Space

When defining a set of states that the agent can occupy, it is necessary to consider the size of the state space. That is to say, if you expect the driving agent to learn a policy for each state, you would need to have an optimal action for every state the agent can occupy. If the number of all possible states is very large, it might be the case that the driving agent never learns what to do in some states, which can lead to uninformed decisions. For example, consider a case where the following features are used to define the state of the Smartcab:

('is_raining', 'is_foggy', 'is_red_light', 'turn_left', 'no_traffic', 'previous_turn_left', 'time_of_day').

How frequently would the agent occupy a state like (False, True, True, True, False, False, '3AM')? Without a near-infinite amount of time for training, it’s doubtful the agent would ever learn the proper action!

Question 5

If a state is defined using the features you’ve selected from Question 4, what would be the size of the state space? Given what you know about the evironment and how it is simulated, do you think the driving agent could learn a policy for each possible state within a reasonable number of training trials?

Hint: Consider the combinations of features to calculate the total number of states!

Answer:

Possible values are:

- waypoint: [‘forward’, ‘left’, ‘right’]

# self.next_waypoint = random.choice(Environment.valid_actions[1:]) - light: [‘red’, ‘green’]

- oncoming: [None, ‘right’, ‘left’, ‘forward’]

- left: [None, ‘right’, ‘left’, ‘forward’]

So, the size of the state space is 3x2x4x4 = 96.

Yes I think so- this is a reasonably small number of states.

Update the Driving Agent State

For your second implementation, navigate to the 'build_state()' agent function. With the justification you’ve provided in Question 4, you will now set the 'state' variable to a tuple of all the features necessary for Q-Learning. Confirm your driving agent is updating its state by running the agent file and simulation briefly and note whether the state is displaying. If the visual simulation is used, confirm that the updated state corresponds with what is seen in the simulation.

Note: Remember to reset simulation flags to their default setting when making this observation!

Implement a Q-Learning Driving Agent

The third step to creating an optimized Q-Learning agent is to begin implementing the functionality of Q-Learning itself. The concept of Q-Learning is fairly straightforward: For every state the agent visits, create an entry in the Q-table for all state-action pairs available. Then, when the agent encounters a state and performs an action, update the Q-value associated with that state-action pair based on the reward received and the interative update rule implemented. Of course, additional benefits come from Q-Learning, such that we can have the agent choose the best action for each state based on the Q-values of each state-action pair possible. For this project, you will be implementing a decaying, $\epsilon$-greedy Q-learning algorithm with no discount factor. Follow the implementation instructions under each TODO in the agent functions.

Note that the agent attribute self.Q is a dictionary: This is how the Q-table will be formed. Each state will be a key of the self.Q dictionary, and each value will then be another dictionary that holds the action and Q-value. Here is an example:

{ 'state-1': { 'action-1' : Qvalue-1, 'action-2' : Qvalue-2, ... }, 'state-2': { 'action-1' : Qvalue-1, ... }, ...}Furthermore, note that you are expected to use a decaying $\epsilon$ (exploration) factor. Hence, as the number of trials increases, $\epsilon$ should decrease towards 0. This is because the agent is expected to learn from its behavior and begin acting on its learned behavior. Additionally, The agent will be tested on what it has learned after $\epsilon$ has passed a certain threshold (the default threshold is 0.01). For the initial Q-Learning implementation, you will be implementing a linear decaying function for $\epsilon$.

Q-Learning Simulation Results

To obtain results from the initial Q-Learning implementation, you will need to adjust the following flags and setup:

'enforce_deadline'- Set this toTrueto force the driving agent to capture whether it reaches the destination in time.'update_delay'- Set this to a small value (such as0.01) to reduce the time between steps in each trial.'log_metrics'- Set this toTrueto log the simluation results as a.csvfile and the Q-table as a.txtfile in/logs/.'n_test'- Set this to'10'to perform 10 testing trials.'learning'- Set this to'True'to tell the driving agent to use your Q-Learning implementation.

In addition, use the following decay function for $\epsilon$:

$$ \epsilon*{t+1} = \epsilon*{t} - 0.05, \hspace{10px}\textrm{for trial number } t$$

If you have difficulty getting your implementation to work, try setting the 'verbose' flag to True to help debug. Flags that have been set here should be returned to their default setting when debugging. It is important that you understand what each flag does and how it affects the simulation!

Once you have successfully completed the initial Q-Learning simulation, run the code cell below to visualize the results. Note that log files are overwritten when identical simulations are run, so be careful with what log file is being loaded!

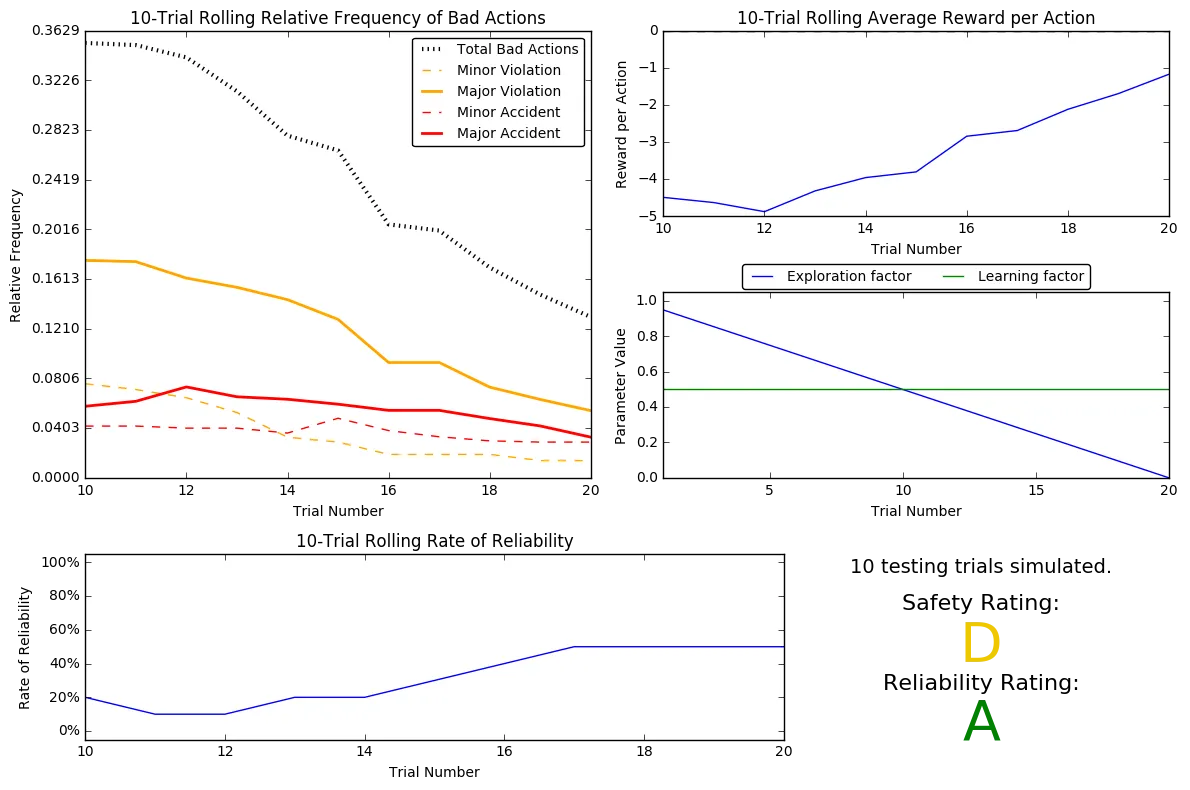

# Load the 'sim_default-learning' file from the default Q-Learning simulationvs.plot_trials('sim_default-learning.csv')

Question 6

Using the visualization above that was produced from your default Q-Learning simulation, provide an analysis and make observations about the driving agent like in Question 3. Note that the simulation should have also produced the Q-table in a text file which can help you make observations about the agent’s learning. Some additional things you could consider:

- Are there any observations that are similar between the basic driving agent and the default Q-Learning agent?

- Approximately how many training trials did the driving agent require before testing? Does that number make sense given the epsilon-tolerance?

- Is the decaying function you implemented for $\epsilon$ (the exploration factor) accurately represented in the parameters panel?

- As the number of training trials increased, did the number of bad actions decrease? Did the average reward increase?

- How does the safety and reliability rating compare to the initial driving agent?

Answer:

Relative frequency of bad actions

- Around 36% of the decisions are bad initially but this came down to around 13%.

- Major and minor violations kept reducing with the number of trials.

- Rate of accidents did not seem to improve.

Average reward per action

This was between -4 and -5 initially but kept increasing almost linearly until the trials were stopped.

Exploration and learning factors

- Learning factor (alpha) was a constant throughout.

- Expoloration factor (epsilon) reduced linearly because we used $\epsilon$ -= 0.05

- As the threshold was 0.05, number of training trials was 20. (Solving for n: 1-n(0.05) < 0.05; n>19)

Rate of reliability

- This started between 0-10% but improved a little before stagnating just above 40%.

This smartcab is somewhat more reliable than the initial driving agent but there is no marked improvement in safety.

Improve the Q-Learning Driving Agent

The third step to creating an optimized Q-Learning agent is to perform the optimization! Now that the Q-Learning algorithm is implemented and the driving agent is successfully learning, it’s necessary to tune settings and adjust learning paramaters so the driving agent learns both safety and efficiency. Typically this step will require a lot of trial and error, as some settings will invariably make the learning worse. One thing to keep in mind is the act of learning itself and the time that this takes: In theory, we could allow the agent to learn for an incredibly long amount of time; however, another goal of Q-Learning is to transition from experimenting with unlearned behavior to acting on learned behavior. For example, always allowing the agent to perform a random action during training (if $\epsilon = 1$ and never decays) will certainly make it learn, but never let it act. When improving on your Q-Learning implementation, consider the impliciations it creates and whether it is logistically sensible to make a particular adjustment.

Improved Q-Learning Simulation Results

To obtain results from the initial Q-Learning implementation, you will need to adjust the following flags and setup:

'enforce_deadline'- Set this toTrueto force the driving agent to capture whether it reaches the destination in time.'update_delay'- Set this to a small value (such as0.01) to reduce the time between steps in each trial.'log_metrics'- Set this toTrueto log the simluation results as a.csvfile and the Q-table as a.txtfile in/logs/.'learning'- Set this to'True'to tell the driving agent to use your Q-Learning implementation.'optimized'- Set this to'True'to tell the driving agent you are performing an optimized version of the Q-Learning implementation.

Additional flags that can be adjusted as part of optimizing the Q-Learning agent:

'n_test'- Set this to some positive number (previously 10) to perform that many testing trials.'alpha'- Set this to a real number between 0 - 1 to adjust the learning rate of the Q-Learning algorithm.'epsilon'- Set this to a real number between 0 - 1 to adjust the starting exploration factor of the Q-Learning algorithm.'tolerance'- set this to some small value larger than 0 (default was 0.05) to set the epsilon threshold for testing.

Furthermore, use a decaying function of your choice for $\epsilon$ (the exploration factor). Note that whichever function you use, it must decay to 'tolerance' at a reasonable rate. The Q-Learning agent will not begin testing until this occurs. Some example decaying functions (for $t$, the number of trials):

$$ \epsilon = a^t, \textrm{for } 0 < a < 1 \hspace{50px}\epsilon = \frac{1}{t^2}\hspace{50px}\epsilon = e^{-at}, \textrm{for } 0 < a < 1 \hspace{50px} \epsilon = \cos(at), \textrm{for } 0 < a < 1$$ You may also use a decaying function for $\alpha$ (the learning rate) if you so choose, however this is typically less common. If you do so, be sure that it adheres to the inequality $0 \leq \alpha \leq 1$.

If you have difficulty getting your implementation to work, try setting the 'verbose' flag to True to help debug. Flags that have been set here should be returned to their default setting when debugging. It is important that you understand what each flag does and how it affects the simulation!

Once you have successfully completed the improved Q-Learning simulation, run the code cell below to visualize the results. Note that log files are overwritten when identical simulations are run, so be careful with what log file is being loaded!

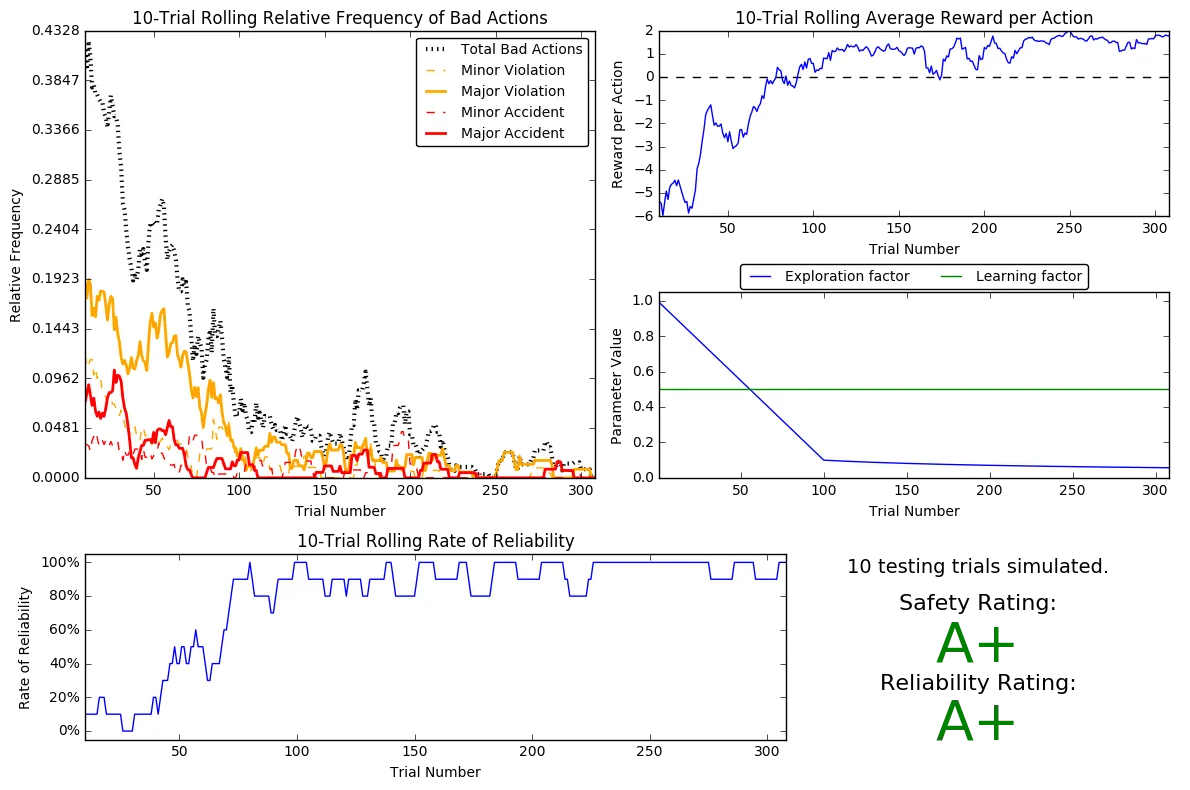

# Load the 'sim_improved-learning' file from the improved Q-Learning simulationvs.plot_trials('sim_improved-learning.csv')

Question 7

Using the visualization above that was produced from your improved Q-Learning simulation, provide a final analysis and make observations about the improved driving agent like in Question 6. Questions you should answer:

- What decaying function was used for epsilon (the exploration factor)?

- Approximately how many training trials were needed for your agent before begining testing?

- What epsilon-tolerance and alpha (learning rate) did you use? Why did you use them?

- How much improvement was made with this Q-Learner when compared to the default Q-Learner from the previous section?

- Would you say that the Q-Learner results show that your driving agent successfully learned an appropriate policy?

- Are you satisfied with the safety and reliability ratings of the Smartcab?

Answer:

I did not try the first suggested example as I left alpha as a constant (0.5)

$\epsilon$ = 1/t:

Exploration factor reduced too quickly, so couldn’t explore much and couldn’t learn much so the ratings were horrible.

$\epsilon$ = 1/t0.5

Decreasing the rate of increase of denominator to decrease the rate of decaly of epsilon, above function resulted in Safety rating of A+ and Reliability rating of A.

Number of trials was 400, which seems too much from the graphs as there was no drastic improvement after around 300 trials.

Picking tolerance = 0.057 (from sim_improved-learning.csv) to get around 300 training trials produced A+ rating for both safety and reliability. I was just trying to reduce the number of training trials but looks like it had the nice effect of increasing the reliability rating too- likely because it was training too much when there were 400 trials.

There were a sizeable number of accidents/violations even when epsilon was close to 0- because epsilon decayed too rapidly to a value around 0.1. I reduced the rate of epsilon decay until it reached 0.1 by reducing it linearly for 100 trials to get to 0.1.

So the final decaying function used is:

$\epsilon$ = $\epsilon$ - 0.009, t<=100

$\epsilon$ = 1/t0.5, otherwise

Relative frequency of bad actions

- Around 40% of the decisions are bad initially but this came down to almost 2%.

- Major and minor violations kept reducing with the number of trials.

- Rate of accidents did not seem to improve in the previous case but it came down to almost 2% in this case.

Average reward per action

This was between -4 and -5 initially but kept increasing to reach positive values (between 1 and 2) until the trials were stopped. In the initial case, there was no improvement here at all.

Exploration and learning factors

- Learning factor (alpha) was a constant throughout.

- Expoloration factor (epsilon) reduced linearly until it reached 0.1 but at a much slower rate than before.

- It reduced at an even slower rate after that until it reached 0.05.

- Instead of just 20 trials, the total trials is around 300.

Rate of reliability

- This started between 0-10% but improved to more than 80% after around 100 trials. This did not cross 40% previously.

I am very satisfied with the A+ ratings and the results show that the driving agent successfully learned an appropriate policy.

Define an Optimal Policy

Sometimes, the answer to the important question “what am I trying to get my agent to learn?” only has a theoretical answer and cannot be concretely described. Here, however, you can concretely define what it is the agent is trying to learn, and that is the U.S. right-of-way traffic laws. Since these laws are known information, you can further define, for each state the Smartcab is occupying, the optimal action for the driving agent based on these laws. In that case, we call the set of optimal state-action pairs an optimal policy. Hence, unlike some theoretical answers, it is clear whether the agent is acting “incorrectly” not only by the reward (penalty) it receives, but also by pure observation. If the agent drives through a red light, we both see it receive a negative reward but also know that it is not the correct behavior. This can be used to your advantage for verifying whether the policy your driving agent has learned is the correct one, or if it is a suboptimal policy.

Question 8

Provide a few examples (using the states you’ve defined) of what an optimal policy for this problem would look like. Afterwards, investigate the 'sim_improved-learning.txt' text file to see the results of your improved Q-Learning algorithm. For each state that has been recorded from the simulation, is the policy (the action with the highest value) correct for the given state? Are there any states where the policy is different than what would be expected from an optimal policy? Provide an example of a state and all state-action rewards recorded, and explain why it is the correct policy.

Answer:

Here is a subset of what the optimum policy should look like- state is (waypoint, light, left, oncoming):

- (Forward, Green, *, *) = Forward

- (Forward, Red, *, *) = None

- (Left, Green, None, None) = Left

- (Right, Green, *, *) = Right

sim_improved-learning.txt:

Looks like most of the policies are correct.

Here’s a correct policy:

(‘forward’, ‘green’, None, None)

- forward : 1.48

- right : 1.06

- None : -4.98

- left : 0.47

Action with the highest Q value is forward- this is the correct action as the smartcab is supposed to go forward when the light is green no matter what other vehicles are planning to do- in this case there are no vehicles to the left or in front.

Here are a couple of wrong policies:

Instead of going forward, the smartcab has learned to go right instead.

(‘forward’, ‘green’, ‘left’, ‘right’)

- forward : 0.00

- right : 0.82

- None : -4.20

- left : 0.00

(‘forward’, ‘green’, ‘forward’, ‘right’)

- forward : 0.00

- right : 0.73

- None : 0.00

- left : 0.00

Optional: Future Rewards - Discount Factor, 'gamma'

Curiously, as part of the Q-Learning algorithm, you were asked to not use the discount factor, 'gamma' in the implementation. Including future rewards in the algorithm is used to aid in propogating positive rewards backwards from a future state to the current state. Essentially, if the driving agent is given the option to make several actions to arrive at different states, including future rewards will bias the agent towards states that could provide even more rewards. An example of this would be the driving agent moving towards a goal: With all actions and rewards equal, moving towards the goal would theoretically yield better rewards if there is an additional reward for reaching the goal. However, even though in this project, the driving agent is trying to reach a destination in the allotted time, including future rewards will not benefit the agent. In fact, if the agent were given many trials to learn, it could negatively affect Q-values!

Optional Question 9

There are two characteristics about the project that invalidate the use of future rewards in the Q-Learning algorithm. One characteristic has to do with the Smartcab itself, and the other has to do with the environment. Can you figure out what they are and why future rewards won’t work for this project?

Answer:

The smartcab has no way of knowing what the next state would be- waypoint can be found for the future state but it is difficult to know the ‘light’, ‘left’ and ‘oncoming’ parameters as they are mostly random. Also, it doesn’t know where it is in the environment which would reflect how far away it is from the destination. The smartcab can only make its decision based on what it knows- which is limited to what’s happening at the intersection it is in.

Although the destination changes for each trial, I am not sure this is relevant because the state doesn’t capture the actual location of the destination. Waypoint could be same for more than one destinations. The smartcab actually just learns whether or not to take the action suggested by waypoint for a given state.