Classifying images using a simple CNN

In this project, you’ll classify images from the CIFAR-10 dataset. The dataset consists of airplanes, dogs, cats, and other objects. You’ll preprocess the images, then train a convolutional neural network on all the samples. The images need to be normalized and the labels need to be one-hot encoded. You’ll apply what you learned and build convolutional, max pooling, dropout, and fully connected layers. At the end, you’ll see your neural network’s predictions on the sample images.

Here is the code for this project, which was part of Udacity’s Machine Learning Engineer Nanodegree.

Get the Data

Run the following cell to download the CIFAR-10 dataset for Python.

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
from urllib.request import urlretrieve
from os.path import isfile, isdir
from tqdm import tqdm
import problem_unittests as tests
import tarfile

cifar10_dataset_folder_path = 'cifar-10-batches-py'

class DLProgress(tqdm):
    last_block = 0

    def hook(self, block_num=1, block_size=1, total_size=None):
        self.total = total_size
        self.update((block_num - self.last_block) * block_size)
        self.last_block = block_num

if not isfile('cifar-10-python.tar.gz'):
    with DLProgress(unit='B', unit_scale=True, miniters=1, desc='CIFAR-10 Dataset') as pbar:
        urlretrieve(
            'https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz',
            'cifar-10-python.tar.gz',
            pbar.hook)

if not isdir(cifar10_dataset_folder_path):
    with tarfile.open('cifar-10-python.tar.gz') as tar:
        tar.extractall()
        tar.close()

tests.test_folder_path(cifar10_dataset_folder_path)

All files found!

Explore the Data

The dataset is broken into batches to prevent your machine from running out of memory. The CIFAR-10 dataset consists of 5 batches, named data_batch_1, data_batch_2, etc. Each batch contains labels and images, and each image belongs to one of the following classes:

- airplane

- automobile

- bird

- cat

- deer

- dog

- frog

- horse

- ship

- truck

Understanding a dataset is part of making predictions on the data. Play around with the code cell below by changing the batch_id and sample_id. The batch_id is the id for a batch (1-5). The sample_id is the id for an image and label pair in the batch.

Ask yourself “What are all possible labels?”, “What is the range of values for the image data?”, “Are the labels in order or random?”. Answers to questions like these will help you preprocess the data and end up with better predictions.

%matplotlib inline
%config InlineBackend.figure_format = 'retina'

import helper
import numpy as np

# Explore the dataset
batch_id = 5
sample_id = 5000
helper.display_stats(cifar10_dataset_folder_path, batch_id, sample_id)

Stats of batch 5:
Samples: 10000
Label Counts: {0: 1014, 1: 1014, 2: 952, 3: 1016, 4: 997, 5: 1025, 6: 980, 7: 977, 8: 1003, 9: 1022}
First 20 Labels: [1, 8, 5, 1, 5, 7, 4, 3, 8, 2, 7, 2, 0, 1, 5, 9, 6, 2, 0, 8]

Example of Image 5000:
Image - Min Value: 0 Max Value: 255
Image - Shape: (32, 32, 3)
Label - Label Id: 7 Name: horse
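To make the (32, 32, 3) shape above concrete, here is a minimal sketch of how a raw CIFAR-10 batch row could be turned into an image array. It assumes the layout documented for the Python version of the dataset (each row holds 3072 bytes: 1024 red, then 1024 green, then 1024 blue values, stored row by row). The array `raw` is synthetic stand-in data, not read from the real files, and `rows_to_images` is a hypothetical name.

```python
import numpy as np

def rows_to_images(raw):
    """Convert (N, 3072) channel-first rows into (N, 32, 32, 3) images."""
    # Each row is [R plane | G plane | B plane], so reshape to (N, 3, 32, 32)
    # and move the channel axis to the end.
    return raw.reshape(len(raw), 3, 32, 32).transpose(0, 2, 3, 1)

# Synthetic stand-in for a batch: 4 rows of uint8 pixel data.
rng = np.random.RandomState(0)
raw = rng.randint(0, 256, size=(4, 3072)).astype(np.uint8)

images = rows_to_images(raw)
print(images.shape)  # (4, 32, 32, 3)
```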

Implement Preprocess Functions

Normalize

In the cell below, implement the normalize function to take in image data, x, and return it as a normalized Numpy array. The values should be in the range of 0 to 1, inclusive. The return object should be the same shape as x.
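One direct way to meet the 0-to-1 requirement is min-max scaling. The sketch below is an illustration under the assumption that the pixels are 8-bit values in the range 0 to 255 (the range display_stats reports above); `normalize_minmax` is a hypothetical name and is separate from the project's normalize function.

```python
import numpy as np

def normalize_minmax(x):
    """Scale 8-bit image data to the range [0, 1], keeping the input shape."""
    # Assumes pixel values lie in 0..255.
    return np.asarray(x, dtype=np.float32) / 255.0

sample = np.array([[[0, 128, 255]]], dtype=np.uint8)
scaled = normalize_minmax(sample)
print(scaled.shape, scaled.min(), scaled.max())
```

Dividing by a fixed constant means every image is scaled the same way, so relative brightness between images is preserved.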

from sklearn import preprocessing

def normalize(x):
    """
    Normalize a list of sample image data in the range of 0 to 1
    : x: List of image data. The image shape is (32, 32, 3)
    : return: Numpy array of normalize data
    """
    # TODO: Implement Function

    # print (x.shape) - looks like x.shape[0] is the number of images
    # print (x.max(), x.min()) - As expected this is 255, 0
    # print (x[0]) - first image

    # Reshaping to 2D array to use normalize function
    _x = (x.reshape(x.shape[0], -1))
    # print (_x[0]) - first image

    # Normalize 2D array and convert back to original shape
    # (preprocessing.normalize scales each row to unit L2 norm by default)
    # *1.0 converts int values to float
    return preprocessing.normalize(_x * 1.0).reshape(x.shape)

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_normalize(normalize)

Tests Passed

One-hot encode

Just like the previous code cell, you’ll be implementing a function for preprocessing. This time, you’ll implement the one_hot_encode function. The input, x, is a list of labels. Implement the function to return the list of labels as a one-hot encoded Numpy array. The possible values for labels are 0 to 9. The one-hot encoding function should return the same encoding for each value between each call to one_hot_encode. Make sure to save the map of encodings outside the function.

Hint:

Look into LabelBinarizer in the preprocessing module of sklearn.
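As an illustration of what one-hot encoding produces, independent of LabelBinarizer, here is a small NumPy sketch. It assumes the labels are integers from 0 to 9; `one_hot_encode_np` is a hypothetical name, not part of the project code.

```python
import numpy as np

N_CLASSES = 10  # CIFAR-10 has ten classes, labelled 0..9

def one_hot_encode_np(labels):
    """Return an (N, 10) array with a single 1 per row at the label index."""
    # Row i of the identity matrix is the one-hot vector for label i.
    return np.eye(N_CLASSES)[np.asarray(labels)]

encoded = one_hot_encode_np([1, 8, 5])
print(encoded.shape)  # (3, 10)
print(encoded[0])
```

Because the mapping is fixed by the identity matrix, the encoding stays the same between calls.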

def one_hot_encode(x):
    """
    One hot encode a list of sample labels. Return a one-hot encoded vector for each label.
    : x: List of sample Labels
    : return: Numpy array of one-hot encoded labels
    """
    # TODO: Implement Function

    labels = range(10)
    label_binarizer = preprocessing.LabelBinarizer().fit(labels)
    return (label_binarizer.transform(x))

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_one_hot_encode(one_hot_encode)

Tests Passed

Randomize Data

As you saw from exploring the data above, the order of the samples is randomized. It doesn’t hurt to randomize it again, but you don’t need to for this dataset.

Preprocess all the data and save it

Running the code cell below will preprocess all the CIFAR-10 data and save it to file. The code below also uses 10% of the training data for validation.

"""
DON'T MODIFY ANYTHING IN THIS CELL
"""
# Preprocess Training, Validation, and Testing Data
helper.preprocess_and_save_data(cifar10_dataset_folder_path, normalize, one_hot_encode)

Check Point

This is your first checkpoint. If you ever decide to come back to this notebook or have to restart the notebook, you can start from here. The preprocessed data has been saved to disk.

"""
DON'T MODIFY ANYTHING IN THIS CELL
"""
import pickle
import problem_unittests as tests
import helper

# Load the Preprocessed Validation data
valid_features, valid_labels = pickle.load(open('preprocess_validation.p', mode='rb'))

Build the network

For the neural network, you’ll build each layer into a function. Most of the code you’ve seen has been outside of functions. To test your code more thoroughly, we require that you put each layer in a function. This allows us to give you better feedback and test for simple mistakes using our unittests before you submit your project.

Note: If you’re finding it hard to dedicate enough time for this course each week, we’ve provided a small shortcut to this part of the project. In the next couple of problems, you’ll have the option to use classes from the TensorFlow Layers or TensorFlow Layers (contrib) packages to build each layer, except the layers you build in the “Convolutional and Max Pooling Layer” section. TF Layers is similar to Keras’s and TFLearn’s abstraction to layers, so it’s easy to pick up.

However, if you would like to get the most out of this course, try to solve all the problems without using anything from the TF Layers packages. You can still use classes from other packages that happen to have the same name as ones you find in TF Layers! For example, instead of using the TF Layers version of the conv2d class, tf.layers.conv2d, you would want to use the TF Neural Network version of conv2d, tf.nn.conv2d.

Let’s begin!

Input

The neural network needs to read the image data, one-hot encoded labels, and dropout keep probability. Implement the following functions:

- Implement neural_net_image_input

  - Return a TF Placeholder

  - Set the shape using image_shape with batch size set to None.

  - Name the TensorFlow placeholder “x” using the TensorFlow name parameter in the TF Placeholder.

- Implement neural_net_label_input

  - Return a TF Placeholder

  - Set the shape using n_classes with batch size set to None.

  - Name the TensorFlow placeholder “y” using the TensorFlow name parameter in the TF Placeholder.

- Implement neural_net_keep_prob_input

  - Return a TF Placeholder for dropout keep probability.

  - Name the TensorFlow placeholder “keep_prob” using the TensorFlow name parameter in the TF Placeholder.

These names will be used at the end of the project to load your saved model.

Note: None for shapes in TensorFlow allows for a dynamic size.
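The placeholder shapes are ordinary Python tuples, so the effect of a leading None can be checked without TensorFlow. A small sketch; `batch_shape` is a hypothetical helper name used only for illustration.

```python
def batch_shape(per_sample_shape):
    """Prefix a per-sample shape with None to leave the batch size open."""
    return (None,) + tuple(per_sample_shape)

print(batch_shape((32, 32, 3)))  # (None, 32, 32, 3)
print(batch_shape((10,)))        # (None, 10)
```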

import tensorflow as tf

def neural_net_image_input(image_shape):
    """
    Return a Tensor for a batch of image input
    : image_shape: Shape of the images
    : return: Tensor for image input.
    """
    # TODO: Implement Function
    return tf.placeholder(tf.float32, shape = ((None, ) + image_shape), name = 'x')

def neural_net_label_input(n_classes):
    """
    Return a Tensor for a batch of label input
    : n_classes: Number of classes
    : return: Tensor for label input.
    """
    # TODO: Implement Function
    return tf.placeholder(tf.float32, shape = (None, n_classes), name = 'y')

def neural_net_keep_prob_input():
    """
    Return a Tensor for keep probability
    : return: Tensor for keep probability.
    """
    # TODO: Implement Function
    return tf.placeholder(tf.float32, name = 'keep_prob')

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tf.reset_default_graph()
tests.test_nn_image_inputs(neural_net_image_input)
tests.test_nn_label_inputs(neural_net_label_input)
tests.test_nn_keep_prob_inputs(neural_net_keep_prob_input)

Image Input Tests Passed.
Label Input Tests Passed.
Keep Prob Tests Passed.

Convolution and Max Pooling Layer

Convolution layers have a lot of success with images. For this code cell, you should implement the function conv2d_maxpool to apply convolution then max pooling:

- Create the weight and bias using conv_ksize, conv_num_outputs and the shape of x_tensor.

- Apply a convolution to x_tensor using weight and conv_strides.

  - We recommend you use same padding, but you’re welcome to use any padding.

- Add bias

- Add a nonlinear activation to the convolution.

- Apply Max Pooling using pool_ksize and pool_strides.

  - We recommend you use same padding, but you’re welcome to use any padding.
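With 'SAME' padding, TensorFlow produces a spatial output size of ceil(input size / stride), regardless of the kernel size. The sketch below applies that rule in plain Python to two convolution and max pooling stages that all use stride 2 and end with 128 channels, which are the values used in the model later in this post; `same_out` is a hypothetical helper name.

```python
import math

def same_out(size, stride):
    """Spatial output size for 'SAME' padding: ceil(size / stride)."""
    return math.ceil(size / stride)

size = 32                      # CIFAR-10 images are 32 x 32
for conv_stride, pool_stride in [(2, 2), (2, 2)]:
    size = same_out(size, conv_stride)   # convolution
    size = same_out(size, pool_stride)   # max pooling
flat_size = size * size * 128  # 128 channels after the second conv layer
print(size, flat_size)         # 2 512
```

Tracing shapes this way is a quick check on how many inputs the first fully connected layer will receive.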

Note: You can’t use TensorFlow Layers or TensorFlow Layers (contrib) for this layer, but you can still use TensorFlow’s Neural Network package. You may still use the shortcut option for all the other layers.

Hint:

When unpacking values as an argument in Python, look into the unpacking operator.
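The unpacking operator builds the 4-D lists that tf.nn.conv2d and tf.nn.max_pool expect from 2-D tuples. The sketch below only constructs those lists in plain Python, using a 5x5 kernel, stride 2, 3 input channels and 64 outputs as example values; `conv_shapes` is a hypothetical name.

```python
def conv_shapes(in_channels, conv_num_outputs, conv_ksize, conv_strides):
    """Build the filter shape and stride list for a 2-D convolution (NHWC)."""
    # Filter layout: [height, width, input channels, output channels]
    weight_shape = [*conv_ksize, in_channels, conv_num_outputs]
    # Strides cover [batch, height, width, channels]; batch and channel stay 1.
    strides = [1, *conv_strides, 1]
    return weight_shape, strides

weight_shape, strides = conv_shapes(3, 64, (5, 5), (2, 2))
print(weight_shape)  # [5, 5, 3, 64]
print(strides)       # [1, 2, 2, 1]
```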

def conv2d_maxpool(x_tensor, conv_num_outputs, conv_ksize, conv_strides, pool_ksize, pool_strides):
    """
    Apply convolution then max pooling to x_tensor
    :param x_tensor: TensorFlow Tensor
    :param conv_num_outputs: Number of outputs for the convolutional layer
    :param conv_ksize: kernel size 2-D Tuple for the convolutional layer
    :param conv_strides: Stride 2-D Tuple for convolution
    :param pool_ksize: kernel size 2-D Tuple for pool
    :param pool_strides: Stride 2-D Tuple for pool
    : return: A tensor that represents convolution and max pooling of x_tensor
    """
    # TODO: Implement Function
    # print (conv_ksize)
    # print (x_tensor.get_shape())

    weight_shape = [*conv_ksize, x_tensor.get_shape().as_list()[3], conv_num_outputs]
    # print (weight_shape)
    weight = tf.Variable(tf.truncated_normal(weight_shape, stddev = 0.1))

    # bias = tf.Variable(tf.truncated_normal([conv_num_outputs]))
    bias = tf.Variable(tf.zeros(conv_num_outputs))

    # print (conv_strides)

    # Convolution
    output = tf.nn.conv2d(input = x_tensor, filter = weight, strides = [1, *conv_strides, 1], padding = 'SAME')
    output = tf.nn.bias_add(output, bias)
    output = tf.nn.relu(output)

    # print (pool_ksize, pool_strides)
    # Max Pooling
    output = tf.nn.max_pool(value = output, ksize = [1, *pool_ksize, 1], strides = [1, *pool_strides, 1], padding = 'SAME')

    return output

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_con_pool(conv2d_maxpool)

Tests Passed

Flatten Layer

Implement the flatten function to change the dimension of x_tensor from a 4-D tensor to a 2-D tensor. The output should be the shape (Batch Size, Flattened Image Size). Shortcut option: you can use classes from the TensorFlow Layers or TensorFlow Layers (contrib) packages for this layer. For more of a challenge, only use other TensorFlow packages.
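To illustrate the target shape, here is the same reshaping idea in NumPy. This is a sketch of the concept rather than the TensorFlow solution; `flatten_np` is a hypothetical name and the input is a dummy array.

```python
import numpy as np

def flatten_np(x):
    """Reshape (batch, ...) to (batch, product of the remaining dimensions)."""
    flat_size = int(np.prod(x.shape[1:]))
    return x.reshape(-1, flat_size)

batch = np.zeros((7, 2, 2, 128))
print(flatten_np(batch).shape)  # (7, 512)
```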

import helper
import numpy as np

def flatten(x_tensor):
    """
    Flatten x_tensor to (Batch Size, Flattened Image Size)
    : x_tensor: A tensor of size (Batch Size, ...), where ... are the image dimensions.
    : return: A tensor of size (Batch Size, Flattened Image Size).
    """
    # TODO: Implement Function

    x_tensor_shape = x_tensor.get_shape().as_list()
    # print (x_tensor_shape)
    flat_shape = np.prod(np.array(x_tensor_shape[1:]))

    return tf.reshape(x_tensor, [-1, flat_shape])

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_flatten(flatten)

Tests Passed

Fully-Connected Layer

Implement the fully_conn function to apply a fully connected layer to x_tensor with the shape (Batch Size, num_outputs). Shortcut option: you can use classes from the TensorFlow Layers or TensorFlow Layers (contrib) packages for this layer. For more of a challenge, only use other TensorFlow packages.
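A fully connected layer with a ReLU activation computes relu(x · W + b). The NumPy sketch below shows that arithmetic on made-up numbers; `fully_conn_np` is a hypothetical name, and the weights are fixed by hand here rather than randomly initialized as they would be in a real layer.

```python
import numpy as np

def fully_conn_np(x, weight, bias):
    """Compute relu(x @ weight + bias) for a 2-D input batch."""
    return np.maximum(0.0, x @ weight + bias)

x = np.array([[1.0, 2.0]])               # batch of 1, two input features
weight = np.array([[1.0, 0.0, -3.0],
                   [0.5, 1.0, 1.0]])     # two inputs -> three outputs
bias = np.zeros(3)
print(fully_conn_np(x, weight, bias))    # [[2. 2. 0.]]
```

The third output is -1 before the activation, so ReLU clips it to 0.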

def fully_conn(x_tensor, num_outputs):
    """
    Apply a fully connected layer to x_tensor using weight and bias
    : x_tensor: A 2-D tensor where the first dimension is batch size.
    : num_outputs: The number of output that the new tensor should be.
    : return: A 2-D tensor where the second dimension is num_outputs.
    """
    # TODO: Implement Function
    flat_shape = np.prod(np.array(x_tensor.get_shape().as_list()[1:]))
    weight = tf.Variable(tf.truncated_normal([flat_shape, num_outputs]))
    #bias = tf.Variable(tf.truncated_normal([num_outputs]))
    bias = tf.Variable(tf.zeros([num_outputs]))

    return tf.nn.relu(tf.add(tf.matmul(x_tensor, weight), bias))

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_fully_conn(fully_conn)

Tests Passed

Output Layer

Implement the output function to apply a fully connected layer to x_tensor with the shape (Batch Size, num_outputs). Shortcut option: you can use classes from the TensorFlow Layers or TensorFlow Layers (contrib) packages for this layer. For more of a challenge, only use other TensorFlow packages.

Note: Activation, softmax, or cross entropy should not be applied to this.
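The output layer returns raw logits because the loss used when the network is built, tf.nn.softmax_cross_entropy_with_logits, applies softmax itself. The NumPy sketch below shows what that combined operation computes; it is an illustration of the math, not TensorFlow's implementation, and `softmax_cross_entropy` is a hypothetical name.

```python
import numpy as np

def softmax_cross_entropy(logits, one_hot_labels):
    """Mean cross-entropy between softmax(logits) and one-hot labels."""
    # Subtract the row maximum before exponentiating for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-(one_hot_labels * log_probs).sum(axis=1).mean())

logits = np.zeros((2, 10))        # equal scores for all ten classes
labels = np.eye(10)[[3, 7]]
print(softmax_cross_entropy(logits, labels))  # ln(10), about 2.3026
```

A loss near ln(10) corresponds to uniform guessing over ten classes, which is a useful reference point when reading the loss values in the training logs.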

def output(x_tensor, num_outputs):
    """
    Apply a output layer to x_tensor using weight and bias
    : x_tensor: A 2-D tensor where the first dimension is batch size.
    : num_outputs: The number of output that the new tensor should be.
    : return: A 2-D tensor where the second dimension is num_outputs.
    """
    # TODO: Implement Function
    flat_shape = np.prod(np.array(x_tensor.get_shape().as_list()[1:]))
    weight = tf.Variable(tf.truncated_normal([flat_shape, num_outputs], stddev = 0.1))
    # bias = tf.Variable(tf.truncated_normal([num_outputs]))
    bias = tf.Variable(tf.zeros(num_outputs))

    return tf.add(tf.matmul(x_tensor, weight), bias)

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_output(output)

Tests Passed

Create Convolutional Model

Implement the function conv_net to create a convolutional neural network model. The function takes in a batch of images, x, and outputs logits. Use the layers you created above to create this model:

- Apply 1, 2, or 3 Convolution and Max Pool layers

- Apply a Flatten Layer

- Apply 1, 2, or 3 Fully Connected Layers

- Apply an Output Layer

- Return the output

- Apply TensorFlow’s Dropout to one or more layers in the model using keep_prob.
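tf.nn.dropout keeps each element with probability keep_prob and scales the kept elements by 1 / keep_prob so the expected activation is unchanged. The NumPy sketch below mimics that behaviour for illustration; `dropout_np` is a hypothetical name and the random generator is seeded only to make the example repeatable.

```python
import numpy as np

def dropout_np(x, keep_prob, rng):
    """Keep each element with probability keep_prob and rescale the survivors."""
    mask = rng.uniform(size=x.shape) < keep_prob
    # Dividing by keep_prob keeps the expected value of each element unchanged.
    return np.where(mask, x / keep_prob, 0.0)

rng = np.random.RandomState(0)
activations = np.ones((1000, 10))
dropped = dropout_np(activations, keep_prob=0.7, rng=rng)
print(np.unique(np.round(dropped, 4)))  # two distinct values: 0 and 1/0.7
print(dropped.mean())                   # close to 1.0
```

With keep_prob set to 1.0 nothing is dropped, which is why that value is fed in when measuring loss and accuracy.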

def conv_net(x, keep_prob):
    """
    Create a convolutional neural network model
    : x: Placeholder tensor that holds image data.
    : keep_prob: Placeholder tensor that hold dropout keep probability.
    : return: Tensor that represents logits
    """
    # TODO: Apply 1, 2, or 3 Convolution and Max Pool layers
    # Play around with different number of outputs, kernel size and stride
    # Function Definition from Above:
    # conv2d_maxpool(x_tensor, conv_num_outputs, conv_ksize, conv_strides, pool_ksize, pool_strides)
    result = conv2d_maxpool(x, conv_num_outputs = 64, conv_ksize = [5, 5], conv_strides = [2, 2], pool_ksize = [3, 3], pool_strides = [2, 2])

    result = conv2d_maxpool(result, conv_num_outputs = 128, conv_ksize = [5, 5], conv_strides = [2, 2], pool_ksize = [3, 3], pool_strides = [2, 2])
    # result = tf.nn.dropout(result, keep_prob)

    # TODO: Apply a Flatten Layer
    # Function Definition from Above:
    # flatten(x_tensor)
    result = flatten(result)
    # result = tf.nn.dropout(result, keep_prob)

    # TODO: Apply 1, 2, or 3 Fully Connected Layers
    # Play around with different number of outputs
    # Function Definition from Above:
    # fully_conn(x_tensor, num_outputs)
    result = fully_conn(result, 256)
    result = fully_conn(result, 128)

    result = tf.nn.dropout(result, keep_prob)

    # TODO: Apply an Output Layer
    # Set this to the number of classes
    # Function Definition from Above:
    # output(x_tensor, num_outputs)
    result = output(result, 10)

    # TODO: return output
    return result

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""

##############################
## Build the Neural Network ##
##############################

# Remove previous weights, bias, inputs, etc..
tf.reset_default_graph()

# Inputs
x = neural_net_image_input((32, 32, 3))
y = neural_net_label_input(10)
keep_prob = neural_net_keep_prob_input()

# Model
logits = conv_net(x, keep_prob)

# Name logits Tensor, so that it can be loaded from disk after training
logits = tf.identity(logits, name='logits')

# Loss and Optimizer
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=logits, labels=y))
optimizer = tf.train.AdamOptimizer().minimize(cost)

# Accuracy
correct_pred = tf.equal(tf.argmax(logits, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32), name='accuracy')

tests.test_conv_net(conv_net)

Neural Network Built!

Train the Neural Network

Single Optimization

Implement the function train_neural_network to do a single optimization. The optimization should use optimizer to optimize in session with a feed_dict of the following:

- x for image input

- y for labels

- keep_prob for keep probability for dropout

This function will be called for each batch, so tf.global_variables_initializer() has already been called.

Note: Nothing needs to be returned. This function is only optimizing the neural network.

def train_neural_network(session, optimizer, keep_probability, feature_batch, label_batch):
    """
    Optimize the session on a batch of images and labels
    : session: Current TensorFlow session
    : optimizer: TensorFlow optimizer function
    : keep_probability: keep probability
    : feature_batch: Batch of Numpy image data
    : label_batch: Batch of Numpy label data
    """
    # TODO: Implement Function
    session.run(optimizer, feed_dict = {x: feature_batch, y: label_batch, keep_prob: keep_probability})

"""
DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE
"""
tests.test_train_nn(train_neural_network)

Tests Passed

Show Stats

Implement the function print_stats to print loss and validation accuracy. Use the global variables valid_features and valid_labels to calculate validation accuracy. Use a keep probability of 1.0 to calculate the loss and validation accuracy.

def print_stats(session, feature_batch, label_batch, cost, accuracy):
    """
    Print information about loss and validation accuracy
    : session: Current TensorFlow session
    : feature_batch: Batch of Numpy image data
    : label_batch: Batch of Numpy label data
    : cost: TensorFlow cost function
    : accuracy: TensorFlow accuracy function
    """
    # TODO: Implement Function
    loss = session.run(cost, feed_dict = {x: feature_batch, y: label_batch, keep_prob: 1.0})
    validation_accuracy = session.run(accuracy, feed_dict = {x: valid_features, y: valid_labels, keep_prob: 1.0})

    print('Loss: {:.4f} Validation Accuracy: {:.6f}'.format(loss, validation_accuracy))
    pass

Hyperparameters

Tune the following parameters:

- Set epochs to the number of iterations until the network stops learning or starts overfitting

- Set batch_size to the highest number that your machine has memory for. Most people set them to common sizes of memory:

  - 64

  - 128

  - 256

  - …

- Set keep_probability to the probability of keeping a node using dropout
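To see how batch_size determines the number of optimization steps in one pass over the data, here is a minimal sketch of a mini-batch generator. It shows the general pattern only and is an assumption, not the code of helper.load_preprocess_training_batch; `iterate_batches` is a hypothetical name and the data is a dummy list.

```python
def iterate_batches(features, labels, batch_size):
    """Yield consecutive (features, labels) slices of at most batch_size items."""
    for start in range(0, len(features), batch_size):
        end = start + batch_size
        yield features[start:end], labels[start:end]

features = list(range(300))
labels = [i % 10 for i in features]
sizes = [len(f) for f, _ in iterate_batches(features, labels, 128)]
print(sizes)  # [128, 128, 44]
```

A larger batch_size means fewer, larger steps per pass; the last batch is simply smaller when the data does not divide evenly.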

# TODO: Tune Parameters
epochs = 10
batch_size = 128
keep_probability = 0.7

Train on a Single CIFAR-10 Batch

Instead of training the neural network on all the CIFAR-10 batches of data, let’s use a single batch. This should save time while you iterate on the model to get a better accuracy. Once the final validation accuracy is 50% or greater, run the model on all the data in the next section.

"""
DON'T MODIFY ANYTHING IN THIS CELL
"""
print('Checking the Training on a Single Batch...')
with tf.Session() as sess:
    # Initializing the variables
    sess.run(tf.global_variables_initializer())

    # Training cycle
    for epoch in range(epochs):
        batch_i = 1
        for batch_features, batch_labels in helper.load_preprocess_training_batch(batch_i, batch_size):
            train_neural_network(sess, optimizer, keep_probability, batch_features, batch_labels)
        print('Epoch {:>2}, CIFAR-10 Batch {}: '.format(epoch + 1, batch_i), end='')
        print_stats(sess, batch_features, batch_labels, cost, accuracy)

Checking the Training on a Single Batch...
Epoch 1, CIFAR-10 Batch 1: Loss: 2.0513 Validation Accuracy: 0.276600
Epoch 2, CIFAR-10 Batch 1: Loss: 1.7966 Validation Accuracy: 0.376200
Epoch 3, CIFAR-10 Batch 1: Loss: 1.5826 Validation Accuracy: 0.402200
Epoch 4, CIFAR-10 Batch 1: Loss: 1.3942 Validation Accuracy: 0.424400
Epoch 5, CIFAR-10 Batch 1: Loss: 1.3691 Validation Accuracy: 0.420000
Epoch 6, CIFAR-10 Batch 1: Loss: 1.2496 Validation Accuracy: 0.449800
Epoch 7, CIFAR-10 Batch 1: Loss: 1.1506 Validation Accuracy: 0.463600
Epoch 8, CIFAR-10 Batch 1: Loss: 1.1062 Validation Accuracy: 0.499600
Epoch 9, CIFAR-10 Batch 1: Loss: 1.0401 Validation Accuracy: 0.489200
Epoch 10, CIFAR-10 Batch 1: Loss: 1.0257 Validation Accuracy: 0.503600

Fully Train the Model

Now that you got a good accuracy with a single CIFAR-10 batch, try it with all five batches.

"""
DON'T MODIFY ANYTHING IN THIS CELL
"""
save_model_path = './image_classification'

print('Training...')
with tf.Session() as sess:
    # Initializing the variables
    sess.run(tf.global_variables_initializer())

    # Training cycle
    for epoch in range(epochs):
        # Loop over all batches
        n_batches = 5
        for batch_i in range(1, n_batches + 1):
            for batch_features, batch_labels in helper.load_preprocess_training_batch(batch_i, batch_size):
                train_neural_network(sess, optimizer, keep_probability, batch_features, batch_labels)
            print('Epoch {:>2}, CIFAR-10 Batch {}: '.format(epoch + 1, batch_i), end='')
            print_stats(sess, batch_features, batch_labels, cost, accuracy)

    # Save Model
    saver = tf.train.Saver()
    save_path = saver.save(sess, save_model_path)

Training...
Epoch 1, CIFAR-10 Batch 1: Loss: 1.9986 Validation Accuracy: 0.308000
Epoch 1, CIFAR-10 Batch 2: Loss: 1.7854 Validation Accuracy: 0.361200
Epoch 1, CIFAR-10 Batch 3: Loss: 1.6440 Validation Accuracy: 0.382800
Epoch 1, CIFAR-10 Batch 4: Loss: 1.5195 Validation Accuracy: 0.434200
Epoch 1, CIFAR-10 Batch 5: Loss: 1.5969 Validation Accuracy: 0.447800
Epoch 2, CIFAR-10 Batch 1: Loss: 1.6263 Validation Accuracy: 0.465000
Epoch 2, CIFAR-10 Batch 2: Loss: 1.4340 Validation Accuracy: 0.472000
Epoch 2, CIFAR-10 Batch 3: Loss: 1.3365 Validation Accuracy: 0.477800
Epoch 2, CIFAR-10 Batch 4: Loss: 1.3107 Validation Accuracy: 0.505600
Epoch 2, CIFAR-10 Batch 5: Loss: 1.3488 Validation Accuracy: 0.512200
Epoch 3, CIFAR-10 Batch 1: Loss: 1.4940 Validation Accuracy: 0.505200
Epoch 3, CIFAR-10 Batch 2: Loss: 1.2058 Validation Accuracy: 0.500600
Epoch 3, CIFAR-10 Batch 3: Loss: 1.1302 Validation Accuracy: 0.518800
Epoch 3, CIFAR-10 Batch 4: Loss: 1.2064 Validation Accuracy: 0.547200
Epoch 3, CIFAR-10 Batch 5: Loss: 1.2112 Validation Accuracy: 0.523600
Epoch 4, CIFAR-10 Batch 1: Loss: 1.2592 Validation Accuracy: 0.539600
Epoch 4, CIFAR-10 Batch 2: Loss: 1.0458 Validation Accuracy: 0.547400
Epoch 4, CIFAR-10 Batch 3: Loss: 0.9999 Validation Accuracy: 0.541400
Epoch 4, CIFAR-10 Batch 4: Loss: 1.0883 Validation Accuracy: 0.553600
Epoch 4, CIFAR-10 Batch 5: Loss: 1.0886 Validation Accuracy: 0.563800
Epoch 5, CIFAR-10 Batch 1: Loss: 1.0814 Validation Accuracy: 0.558600
Epoch 5, CIFAR-10 Batch 2: Loss: 0.9006 Validation Accuracy: 0.561400
Epoch 5, CIFAR-10 Batch 3: Loss: 0.8974 Validation Accuracy: 0.566200
Epoch 5, CIFAR-10 Batch 4: Loss: 1.0097 Validation Accuracy: 0.580400
Epoch 5, CIFAR-10 Batch 5: Loss: 0.8654 Validation Accuracy: 0.581800
Epoch 6, CIFAR-10 Batch 1: Loss: 1.0644 Validation Accuracy: 0.569800
Epoch 6, CIFAR-10 Batch 2: Loss: 0.8089 Validation Accuracy: 0.559200
Epoch 6, CIFAR-10 Batch 3: Loss: 0.8420 Validation Accuracy: 0.579000
Epoch 6, CIFAR-10 Batch 4: Loss: 0.9918 Validation Accuracy: 0.600800
Epoch 6, CIFAR-10 Batch 5: Loss: 0.8985 Validation Accuracy: 0.595600
Epoch 7, CIFAR-10 Batch 1: Loss: 1.0379 Validation Accuracy: 0.586200
Epoch 7, CIFAR-10 Batch 2: Loss: 0.7342 Validation Accuracy: 0.591000
Epoch 7, CIFAR-10 Batch 3: Loss: 0.6624 Validation Accuracy: 0.588000
Epoch 7, CIFAR-10 Batch 4: Loss: 0.8465 Validation Accuracy: 0.591000
Epoch 7, CIFAR-10 Batch 5: Loss: 0.8206 Validation Accuracy: 0.596000
Epoch 8, CIFAR-10 Batch 1: Loss: 0.8433 Validation Accuracy: 0.590400
Epoch 8, CIFAR-10 Batch 2: Loss: 0.6875 Validation Accuracy: 0.590400
Epoch 8, CIFAR-10 Batch 3: Loss: 0.6787 Validation Accuracy: 0.581600
Epoch 8, CIFAR-10 Batch 4: Loss: 0.7779 Validation Accuracy: 0.610800
Epoch 8, CIFAR-10 Batch 5: Loss: 0.7793 Validation Accuracy: 0.587000
Epoch 9, CIFAR-10 Batch 1: Loss: 0.8665 Validation Accuracy: 0.595800
Epoch 9, CIFAR-10 Batch 2: Loss: 0.6141 Validation Accuracy: 0.600800
Epoch 9, CIFAR-10 Batch 3: Loss: 0.5512 Validation Accuracy: 0.610200
Epoch 9, CIFAR-10 Batch 4: Loss: 0.7600 Validation Accuracy: 0.607000
Epoch 9, CIFAR-10 Batch 5: Loss: 0.7141 Validation Accuracy: 0.590000
Epoch 10, CIFAR-10 Batch 1: Loss: 0.8153 Validation Accuracy: 0.624600
Epoch 10, CIFAR-10 Batch 2: Loss: 0.5526 Validation Accuracy: 0.608800
Epoch 10, CIFAR-10 Batch 3: Loss: 0.4789 Validation Accuracy: 0.615600
Epoch 10, CIFAR-10 Batch 4: Loss: 0.6982 Validation Accuracy: 0.614000
Epoch 10, CIFAR-10 Batch 5: Loss: 0.6622 Validation Accuracy: 0.617400

Checkpoint

The model has been saved to disk.

Test Model

Test your model against the test dataset. This will be your final accuracy. You should have an accuracy greater than 50%. If you don’t, keep tweaking the model architecture and parameters.

"""
DON'T MODIFY ANYTHING IN THIS CELL
"""
%matplotlib inline
%config InlineBackend.figure_format = 'retina'

import tensorflow as tf
import pickle
import helper
import random

# Set batch size if not already set
try:
    if batch_size:
        pass
except NameError:
    batch_size = 64

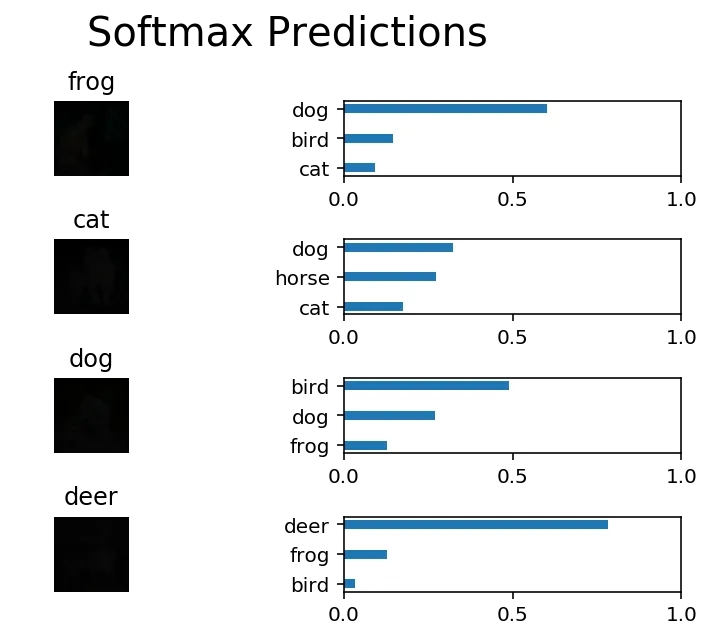

save_model_path = './image_classification'
n_samples = 4
top_n_predictions = 3

def test_model():
    """
    Test the saved model against the test dataset
    """

    test_features, test_labels = pickle.load(open('preprocess_training.p', mode='rb'))
    loaded_graph = tf.Graph()

    with tf.Session(graph=loaded_graph) as sess:
        # Load model
        loader = tf.train.import_meta_graph(save_model_path + '.meta')
        loader.restore(sess, save_model_path)

        # Get Tensors from loaded model
        loaded_x = loaded_graph.get_tensor_by_name('x:0')
        loaded_y = loaded_graph.get_tensor_by_name('y:0')
        loaded_keep_prob = loaded_graph.get_tensor_by_name('keep_prob:0')
        loaded_logits = loaded_graph.get_tensor_by_name('logits:0')
        loaded_acc = loaded_graph.get_tensor_by_name('accuracy:0')

        # Get accuracy in batches for memory limitations
        test_batch_acc_total = 0
        test_batch_count = 0

        for train_feature_batch, train_label_batch in helper.batch_features_labels(test_features, test_labels, batch_size):
            test_batch_acc_total += sess.run(
                loaded_acc,
                feed_dict={loaded_x: train_feature_batch, loaded_y: train_label_batch, loaded_keep_prob: 1.0})
            test_batch_count += 1

        print('Testing Accuracy: {}\n'.format(test_batch_acc_total/test_batch_count))

        # Print Random Samples
        random_test_features, random_test_labels = tuple(zip(*random.sample(list(zip(test_features, test_labels)), n_samples)))
        random_test_predictions = sess.run(
            tf.nn.top_k(tf.nn.softmax(loaded_logits), top_n_predictions),
            feed_dict={loaded_x: random_test_features, loaded_y: random_test_labels, loaded_keep_prob: 1.0})
        helper.display_image_predictions(random_test_features, random_test_labels, random_test_predictions)

test_model()

INFO:tensorflow:Restoring parameters from ./image_classification
Testing Accuracy: 0.6204509493670886
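The prediction step above takes the softmax of the logits and keeps the top_n_predictions largest entries. Here is a NumPy sketch of that computation, offered as an illustration rather than a reimplementation of tf.nn.top_k; `top_k_softmax` is a hypothetical name and the logits are made-up values.

```python
import numpy as np

def top_k_softmax(logits, k):
    """Return (probabilities, class indices) of the k largest softmax outputs per row."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    probs = np.exp(shifted) / np.exp(shifted).sum(axis=1, keepdims=True)
    # argsort is ascending, so take the last k columns and reverse them.
    indices = np.argsort(probs, axis=1)[:, -k:][:, ::-1]
    values = np.take_along_axis(probs, indices, axis=1)
    return values, indices

logits = np.array([[2.0, 0.5, 1.0, -1.0]])
values, indices = top_k_softmax(logits, k=3)
print(indices)  # [[0 2 1]]
```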

Why 50-80% Accuracy?

You might be wondering why you can’t get an accuracy any higher. First things first, 50% isn’t bad for a simple CNN. Pure guessing would get you 10% accuracy. Higher scores are within reach because there are many more techniques that can be applied to your model, and we recommend that you explore them once you are done with this project!